url

stringlengths 58

61

| repository_url

stringclasses 1

value | labels_url

stringlengths 72

75

| comments_url

stringlengths 67

70

| events_url

stringlengths 65

68

| html_url

stringlengths 48

51

| id

int64 600M

2.19B

| node_id

stringlengths 18

24

| number

int64 2

6.73k

| title

stringlengths 1

290

| user

dict | labels

listlengths 0

4

| state

stringclasses 2

values | locked

bool 1

class | assignee

dict | assignees

listlengths 0

4

| milestone

dict | comments

listlengths 0

30

| created_at

timestamp[s] | updated_at

timestamp[s] | closed_at

timestamp[s] | author_association

stringclasses 3

values | active_lock_reason

null | draft

null | pull_request

null | body

stringlengths 0

228k

⌀ | reactions

dict | timeline_url

stringlengths 67

70

| performed_via_github_app

null | state_reason

stringclasses 3

values |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5523

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5523/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5523/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5523/events

|

https://github.com/huggingface/datasets/issues/5523

| 1,580,193,015 |

I_kwDODunzps5eL9T3

| 5,523 |

Checking that split name is correct happens only after the data is downloaded

|

{

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

}

] |

open

| false |

{

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"login": "polinaeterna",

"id": 16348744,

"node_id": "MDQ6VXNlcjE2MzQ4NzQ0",

"avatar_url": "https://avatars.githubusercontent.com/u/16348744?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/polinaeterna",

"html_url": "https://github.com/polinaeterna",

"followers_url": "https://api.github.com/users/polinaeterna/followers",

"following_url": "https://api.github.com/users/polinaeterna/following{/other_user}",

"gists_url": "https://api.github.com/users/polinaeterna/gists{/gist_id}",

"starred_url": "https://api.github.com/users/polinaeterna/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/polinaeterna/subscriptions",

"organizations_url": "https://api.github.com/users/polinaeterna/orgs",

"repos_url": "https://api.github.com/users/polinaeterna/repos",

"events_url": "https://api.github.com/users/polinaeterna/events{/privacy}",

"received_events_url": "https://api.github.com/users/polinaeterna/received_events",

"type": "User",

"site_admin": false

}

] | null |

[] | 2023-02-10T19:13:03 | 2023-02-10T19:14:50 | null |

CONTRIBUTOR

| null | null | null |

### Describe the bug

Split names are verified (that is, the data is indexed by split) only after the data has been downloaded. When the split name is incorrect, users find out only once the download has finished, which can take a long time for large datasets.

### Steps to reproduce the bug

Load any dataset with a random split name, for example:

```python

from datasets import load_dataset

load_dataset("mozilla-foundation/common_voice_11_0", "en", split="blabla")

```

The download starts without complaint, even though there is no split named "blabla".

### Expected behavior

Raise an error when the split name is incorrect, before any data is downloaded.
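
One way to picture this is a check that runs before the download starts. The sketch below is only an illustration, not the library's implementation: `validate_split` and `load_checked` are hypothetical helpers, only plain split names are handled (not slices such as `train[:10%]` or combinations such as `train+test`), and it assumes that `datasets.get_dataset_split_names` can list the splits without fetching the full data.

```python
from typing import Iterable


def validate_split(split: str, available: Iterable[str]) -> None:
    """Raise ValueError if `split` is not one of the `available` split names."""
    names = list(available)
    if split not in names:
        raise ValueError(f"Unknown split {split!r}. Available splits: {names}")


def load_checked(path: str, name: str, split: str):
    """Hypothetical wrapper: check the split name first, then load."""
    # Imported lazily so validate_split stays usable without `datasets` installed.
    from datasets import get_dataset_split_names, load_dataset

    validate_split(split, get_dataset_split_names(path, name))
    return load_dataset(path, name, split=split)
```

With such a wrapper, `load_checked("mozilla-foundation/common_voice_11_0", "en", "blabla")` would be expected to fail at the validation step rather than after the download.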

### Environment info

`datasets==2.9.1.dev0`

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5523/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5523/timeline

| null | null |

https://api.github.com/repos/huggingface/datasets/issues/5520

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5520/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5520/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5520/events

|

https://github.com/huggingface/datasets/issues/5520

| 1,578,417,074 |

I_kwDODunzps5eFLuy

| 5,520 |

ClassLabel.cast_storage raises TypeError when called on an empty IntegerArray

|

{

"login": "marioga",

"id": 6591505,

"node_id": "MDQ6VXNlcjY1OTE1MDU=",

"avatar_url": "https://avatars.githubusercontent.com/u/6591505?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/marioga",

"html_url": "https://github.com/marioga",

"followers_url": "https://api.github.com/users/marioga/followers",

"following_url": "https://api.github.com/users/marioga/following{/other_user}",

"gists_url": "https://api.github.com/users/marioga/gists{/gist_id}",

"starred_url": "https://api.github.com/users/marioga/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/marioga/subscriptions",

"organizations_url": "https://api.github.com/users/marioga/orgs",

"repos_url": "https://api.github.com/users/marioga/repos",

"events_url": "https://api.github.com/users/marioga/events{/privacy}",

"received_events_url": "https://api.github.com/users/marioga/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[] | 2023-02-09T18:46:52 | 2023-02-12T11:17:18 | 2023-02-12T11:17:18 |

CONTRIBUTOR

| null | null | null |

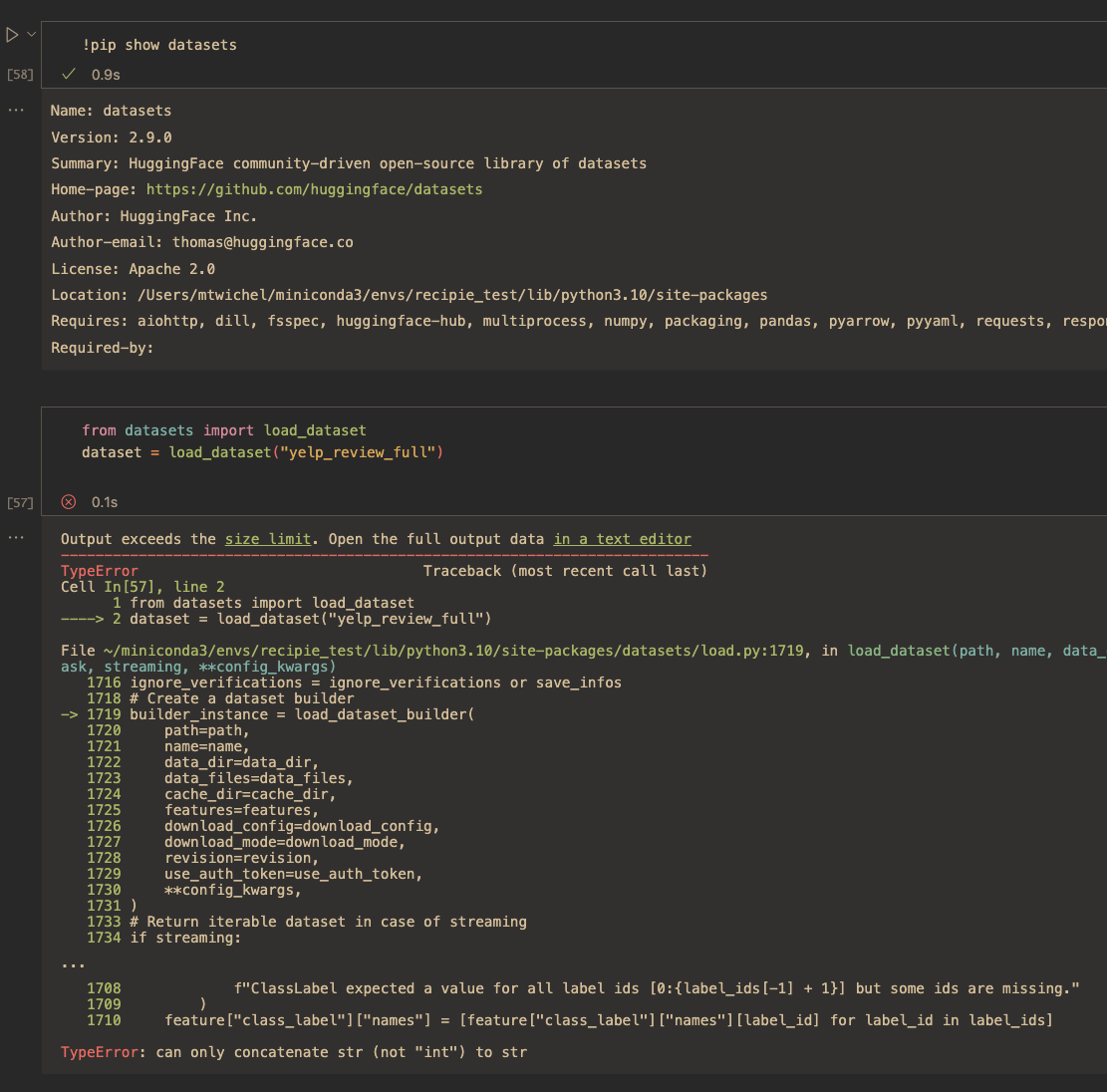

### Describe the bug

`ClassLabel.cast_storage` raises `TypeError` when called on an empty `IntegerArray`.

### Steps to reproduce the bug

Minimal steps:

```python

import pyarrow as pa

from datasets import ClassLabel

ClassLabel(names=['foo', 'bar']).cast_storage(pa.array([], pa.int64()))

```

In practice, this bug arises in situations like the one below:

```python

from datasets import ClassLabel, Dataset, Features, Sequence

dataset = Dataset.from_dict({'labels': [[], []]}, features=Features({'labels': Sequence(ClassLabel(names=['foo', 'bar']))}))

# this raises TypeError

dataset.map(batched=True, batch_size=1)

```

### Expected behavior

`ClassLabel.cast_storage` should return an empty Int64Array.
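
A minimal sketch of the expected behavior, written with plain Python lists rather than PyArrow so that it stays self-contained. `check_label_ids` is a hypothetical stand-in, not the `ClassLabel` code; the only point is that a range check should be skipped when there are no values, so that an empty input yields an empty output instead of an error.

```python
from typing import List, Optional


def check_label_ids(ids: List[Optional[int]], num_classes: int) -> List[Optional[int]]:
    """Return a copy of `ids` after checking every non-null id is a valid class index."""
    present = [i for i in ids if i is not None]
    # Only range-check when there is something to check: min()/max() of an
    # empty list would raise, which is the kind of failure an empty input
    # should not trigger.
    if present and not (0 <= min(present) and max(present) < num_classes):
        raise ValueError(f"Class label out of range for {num_classes} classes")
    return list(ids)
```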

### Environment info

- `datasets` version: 2.9.1.dev0

- Platform: Linux-4.15.0-1032-aws-x86_64-with-glibc2.27

- Python version: 3.10.6

- PyArrow version: 11.0.0

- Pandas version: 1.5.3

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5520/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5520/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5517

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5517/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5517/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5517/events

|

https://github.com/huggingface/datasets/issues/5517

| 1,577,976,608 |

I_kwDODunzps5eDgMg

| 5,517 |

`with_format("numpy")` silently downcasts float64 to float32 features

|

{

"login": "ernestum",

"id": 1250234,

"node_id": "MDQ6VXNlcjEyNTAyMzQ=",

"avatar_url": "https://avatars.githubusercontent.com/u/1250234?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/ernestum",

"html_url": "https://github.com/ernestum",

"followers_url": "https://api.github.com/users/ernestum/followers",

"following_url": "https://api.github.com/users/ernestum/following{/other_user}",

"gists_url": "https://api.github.com/users/ernestum/gists{/gist_id}",

"starred_url": "https://api.github.com/users/ernestum/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/ernestum/subscriptions",

"organizations_url": "https://api.github.com/users/ernestum/orgs",

"repos_url": "https://api.github.com/users/ernestum/repos",

"events_url": "https://api.github.com/users/ernestum/events{/privacy}",

"received_events_url": "https://api.github.com/users/ernestum/received_events",

"type": "User",

"site_admin": false

}

|

[] |

open

| false | null |

[] |

{

"url": "https://api.github.com/repos/huggingface/datasets/milestones/10",

"html_url": "https://github.com/huggingface/datasets/milestone/10",

"labels_url": "https://api.github.com/repos/huggingface/datasets/milestones/10/labels",

"id": 9038583,

"node_id": "MI_kwDODunzps4Aier3",

"number": 10,

"title": "3.0",

"description": "Next major release",

"creator": {

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

},

"open_issues": 4,

"closed_issues": 0,

"state": "open",

"created_at": "2023-02-13T16:22:42",

"updated_at": "2023-09-22T14:07:52",

"due_on": null,

"closed_at": null

}

|

[

"Hi! This behavior stems from these lines:\r\n\r\nhttps://github.com/huggingface/datasets/blob/b065547654efa0ec633cf373ac1512884c68b2e1/src/datasets/formatting/np_formatter.py#L45-L46\r\n\r\nI agree we should preserve the original type whenever possible and downcast explicitly with a warning.\r\n\r\n@lhoestq Do you remember why we need this \"default dtype\" logic in our formatters?",

"I was also wondering why the default type logic is needed. Me just deleting it is probably too naive of a solution.",

"Hmm I think the idea was to end up with the usual default precision for deep learning models - no matter how the data was stored or where it comes from.\r\n\r\nFor example in NLP we store tokens using an optimized low precision to save disk space, but when we set the format to `torch` we actually need to get `int64`. Although the need for a default for integers also comes from numpy not returning the same integer precision depending on your machine. Finally I guess we added a default for floats as well for consistency.\r\n\r\nI'm a bit embarrassed by this though, as a user I'd have expected to get the same precision indeed as well and get a zero copy view.",

"Will you fix this or should I open a PR?",

"Unfortunately removing it for integers is a breaking change for most `transformers` + `datasets` users for NLP (which is a common case). Removing it for floats is a breaking change for `transformers` + `datasets` for ASR as well. And it also is a breaking change for the other users relying on this behavior.\r\n\r\nTherefore I think that the only short term solution is for the user to provide `dtype=` manually and document better this behavior. We could also extend `dtype` to accept a value that means \"return the same dtype as the underlying storage\" and make it easier to do zero copy.",

"@lhoestq It should be fine to remove this conversion in Datasets 3.0, no? For now, we can warn the user (with a log message) about the future change when the default type is changed.",

"Let's see with the transformers team if it sounds reasonable ? We'd have to fix multiple example scripts though.\r\n\r\nIf it's not ok we can also explore keeping this behavior only for tokens and audio data.",

"IMO being coupled with Transformers can lead to unexpected behavior when one tries to use our lib without pairing it with Transformers, so I think it's still important to \"fix\" this, even if it means we will need to update Transformers' example scripts afterward.\r\n",

"Ideally let's update the `transformers` example scripts before the change :P",

"For others that run into the same issue: A temporary workaround for me is this:\r\n```python\r\ndef numpy_transform(batch):\r\n return {key: np.asarray(val) for key, val in batch.items()}\r\n\r\ndataset = dataset.with_transform(numpy_transform)\r\n```",

"This behavior (silent upcast from `int32` to `int64`) is also unexpected for the user in https://discuss.huggingface.co/t/standard-getitem-returns-wrong-data-type-for-arrays/62470/2",

"Hi, I stumbled on a variation that upcasts uint8 to int64. I would expect the dtype to be the same as it was when I generated the dataset.\r\n\r\n```\r\nimport numpy as np\r\nimport datasets as ds\r\n\r\nfoo = np.random.randint(0, 256, size=(5, 10, 10), dtype=np.uint8)\r\n\r\nfeatures = ds.Features({\"foo\": ds.Array2D((10, 10), \"uint8\")})\r\ndataset = ds.Dataset.from_dict({\"foo\": foo}, features=features)\r\ndataset.set_format(\"torch\")\r\nprint(\"feature dtype:\", dataset.features[\"foo\"].dtype)\r\nprint(\"array dtype:\", dataset[\"foo\"].dtype)\r\n\r\n# feature dtype: uint8\r\n# array dtype: torch.int64\r\n```\r\n",

"workaround to remove torch upcasting\r\n\r\n```\r\nimport datasets as ds\r\nimport torch\r\n\r\nclass FixedTorchFormatter(ds.formatting.TorchFormatter):\r\n def _tensorize(self, value):\r\n return torch.from_numpy(value)\r\n\r\n\r\nds.formatting._register_formatter(FixedTorchFormatter, \"torch\")\r\n```"

] | 2023-02-09T14:18:00 | 2024-01-18T08:42:17 | null |

NONE

| null | null | null |

### Describe the bug

When I create a dataset with a `float64` feature and then apply numpy formatting, the returned numpy arrays are silently downcast to `float32`.

### Steps to reproduce the bug

```python

import datasets

dataset = datasets.Dataset.from_dict({'a': [1.0, 2.0, 3.0]}).with_format("numpy")

print("feature dtype:", dataset.features['a'].dtype)

print("array dtype:", dataset['a'].dtype)

```

output:

```

feature dtype: float64

array dtype: float32

```

### Expected behavior

```

feature dtype: float64

array dtype: float64

```
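
To illustrate why a silent `float64` to `float32` downcast matters, here is a small standard-library sketch that is independent of `datasets` and numpy. `through_float32` is a hypothetical helper that round-trips a Python float (a 64-bit double) through a 4-byte float.

```python
import struct


def through_float32(x: float) -> float:
    """Round-trip a Python float (float64) through a 4-byte float32."""
    return struct.unpack("f", struct.pack("f", x))[0]


print(through_float32(0.5))         # 0.5 is exactly representable, so it survives
print(through_float32(0.1))         # 0.1 is not, so the value changes slightly
print(through_float32(0.1) == 0.1)  # the round-tripped value no longer compares equal
```

Values that are not exactly representable in 32 bits come back changed, so equality checks and accumulated sums can differ from what the stored `float64` data would give.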

### Environment info

- `datasets` version: 2.8.0

- Platform: Linux-5.4.0-135-generic-x86_64-with-glibc2.29

- Python version: 3.8.10

- PyArrow version: 10.0.1

- Pandas version: 1.4.4

### Suggested Fix

Changing [the `_tensorize` function of the numpy formatter](https://github.com/huggingface/datasets/blob/b065547654efa0ec633cf373ac1512884c68b2e1/src/datasets/formatting/np_formatter.py#L32) to

```python

def _tensorize(self, value):
    if isinstance(value, (str, bytes, type(None))):
        return value
    elif isinstance(value, (np.character, np.ndarray)) and np.issubdtype(value.dtype, np.character):
        return value
    elif isinstance(value, np.number):
        return value
    return np.asarray(value, **self.np_array_kwargs)

```

fixes this particular issue for me. Not sure if this would break other tests. This should also avoid unnecessary copying of the array.

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5517/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5517/timeline

| null | null |

https://api.github.com/repos/huggingface/datasets/issues/5514

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5514/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5514/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5514/events

|

https://github.com/huggingface/datasets/issues/5514

| 1,576,453,837 |

I_kwDODunzps5d9sbN

| 5,514 |

Improve inconsistency of `Dataset.map` interface for `load_from_cache_file`

|

{

"login": "HallerPatrick",

"id": 22773355,

"node_id": "MDQ6VXNlcjIyNzczMzU1",

"avatar_url": "https://avatars.githubusercontent.com/u/22773355?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/HallerPatrick",

"html_url": "https://github.com/HallerPatrick",

"followers_url": "https://api.github.com/users/HallerPatrick/followers",

"following_url": "https://api.github.com/users/HallerPatrick/following{/other_user}",

"gists_url": "https://api.github.com/users/HallerPatrick/gists{/gist_id}",

"starred_url": "https://api.github.com/users/HallerPatrick/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/HallerPatrick/subscriptions",

"organizations_url": "https://api.github.com/users/HallerPatrick/orgs",

"repos_url": "https://api.github.com/users/HallerPatrick/repos",

"events_url": "https://api.github.com/users/HallerPatrick/events{/privacy}",

"received_events_url": "https://api.github.com/users/HallerPatrick/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] |

closed

| false | null |

[] | null |

[

"Hi, thanks for noticing this! We can't just remove the cache control as this allows us to control where the arrow files generated by the ops are written (cached on disk if enabled or a temporary directory if disabled). The right way to address this inconsistency would be by having `load_from_cache_file=None` by default everywhere.",

"Hi! Yes, this seems more plausible. I can implement that. One last thing is the type annotation `load_from_cache_file: bool = None`. Which I then would change to `load_from_cache_file: Optional[bool] = None`.",

"PR #5515 ",

"Yes, `Optional[bool]` is the correct type annotation and thanks for the PR."

] | 2023-02-08T16:40:44 | 2023-02-14T14:26:44 | 2023-02-14T14:26:44 |

CONTRIBUTOR

| null | null | null |

### Feature request

1. Change the default value of `load_from_cache_file` to `True`.

2. Remove or alter checks from `is_caching_enabled` logic.

### Motivation

I stumbled over an inconsistency in the `Dataset.map` interface. The documentation (and source) states for the parameter `load_from_cache_file`:

```

load_from_cache_file (`bool`, defaults to `True` if caching is enabled):

If a cache file storing the current computation from `function`

can be identified, use it instead of recomputing.

```

1. `load_from_cache_file` default value is `None`, while being annotated as `bool`

2. It is inconsistent with other method signatures such as `filter`, which have the default value `True`.

3. The logic is inconsistent, as the `map` method checks if caching is enabled through `is_caching_enabled`. This logic is not used for other similar methods.
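
The pattern discussed in this issue, an `Optional[bool]` parameter whose `None` default defers to a global caching switch, can be sketched as follows. This is a stand-alone illustration under assumptions, not the library's code: `_caching_enabled` and `resolve_load_from_cache_file` are hypothetical names, and `is_caching_enabled` here is a local stand-in for the library function of the same name.

```python
from typing import Optional

_caching_enabled = True  # hypothetical stand-in for the library-wide setting


def is_caching_enabled() -> bool:
    """Local stand-in for the global caching check."""
    return _caching_enabled


def resolve_load_from_cache_file(load_from_cache_file: Optional[bool] = None) -> bool:
    """An explicit True/False wins; None falls back to the global setting."""
    if load_from_cache_file is None:
        return is_caching_enabled()
    return load_from_cache_file
```

Annotating the parameter as `Optional[bool]` makes the `None` default honest, and resolving it in one place lets every method share the same fallback.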

### Your contribution

I am not fully aware of the logic behind the caching checks. If this is just an inconsistency that grew historically, I would suggest removing the `is_caching_enabled` logic as the "default" logic. Maybe someone can give insight into whether environment variables have a higher priority than local variables or vice versa.

If this is clarified, I could adjust the source according to the "Feature request" section of this issue.

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5514/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5514/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5513

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5513/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5513/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5513/events

|

https://github.com/huggingface/datasets/issues/5513

| 1,576,300,803 |

I_kwDODunzps5d9HED

| 5,513 |

Some functions use a param named `type` shouldn't that be avoided since it's a Python reserved name?

|

{

"login": "alvarobartt",

"id": 36760800,

"node_id": "MDQ6VXNlcjM2NzYwODAw",

"avatar_url": "https://avatars.githubusercontent.com/u/36760800?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/alvarobartt",

"html_url": "https://github.com/alvarobartt",

"followers_url": "https://api.github.com/users/alvarobartt/followers",

"following_url": "https://api.github.com/users/alvarobartt/following{/other_user}",

"gists_url": "https://api.github.com/users/alvarobartt/gists{/gist_id}",

"starred_url": "https://api.github.com/users/alvarobartt/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/alvarobartt/subscriptions",

"organizations_url": "https://api.github.com/users/alvarobartt/orgs",

"repos_url": "https://api.github.com/users/alvarobartt/repos",

"events_url": "https://api.github.com/users/alvarobartt/events{/privacy}",

"received_events_url": "https://api.github.com/users/alvarobartt/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[

"Hi! Let's not do this - renaming it would be a breaking change, and going through the deprecation cycle is only worth it if it improves user experience.",

"Hi @mariosasko, ok it makes sense. Anyway, don't you think it's worth it at some point to start a deprecation cycle e.g. `fs` in `load_from_disk`? It doesn't affect user experience but it's for sure a bad practice IMO, but's up to you 😄 Feel free to close this issue otherwise!",

"I don't think deprecating a param name in this particular instance is worth the hassle, so I'm closing the issue 🙂.",

"Sure, makes sense @mariosasko thanks!"

] | 2023-02-08T15:13:46 | 2023-07-24T16:02:18 | 2023-07-24T14:27:59 |

CONTRIBUTOR

| null | null | null |

Hi @mariosasko, @lhoestq, or whoever reads this! :)

After going through `ArrowDataset.set_format` I noticed that the format parameter is named `type`, which shadows Python's built-in `type`. Shouldn't it be renamed to `format_type` before 3.0.0 is released?
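
Because `type` is a built-in name rather than a reserved keyword, using it as a parameter is legal but shadows the built-in inside the function body. A small standard-library sketch of that distinction follows; the toy `set_format` below is hypothetical and is not the library's method.

```python
import builtins
import keyword

# `type` is a built-in name, not a reserved keyword, so it is legal as a parameter.
print(keyword.iskeyword("type"))   # reserved words such as "class" return True here
print(hasattr(builtins, "type"))


def set_format(type=None):
    """Toy function: inside this body, `type` refers to the argument."""
    # The built-in remains reachable through the `builtins` module.
    return builtins.type(type).__name__
```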

Just wanted to get your input, and if applicable, tackle this issue myself! Thanks 🤗

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5513/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5513/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5511

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5511/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5511/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5511/events

|

https://github.com/huggingface/datasets/issues/5511

| 1,575,851,768 |

I_kwDODunzps5d7Zb4

| 5,511 |

Creating a dummy dataset from a bigger one

|

{

"login": "patrickvonplaten",

"id": 23423619,

"node_id": "MDQ6VXNlcjIzNDIzNjE5",

"avatar_url": "https://avatars.githubusercontent.com/u/23423619?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/patrickvonplaten",

"html_url": "https://github.com/patrickvonplaten",

"followers_url": "https://api.github.com/users/patrickvonplaten/followers",

"following_url": "https://api.github.com/users/patrickvonplaten/following{/other_user}",

"gists_url": "https://api.github.com/users/patrickvonplaten/gists{/gist_id}",

"starred_url": "https://api.github.com/users/patrickvonplaten/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/patrickvonplaten/subscriptions",

"organizations_url": "https://api.github.com/users/patrickvonplaten/orgs",

"repos_url": "https://api.github.com/users/patrickvonplaten/repos",

"events_url": "https://api.github.com/users/patrickvonplaten/events{/privacy}",

"received_events_url": "https://api.github.com/users/patrickvonplaten/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[

"Update `datasets` or downgrade `huggingface-hub` ;)\r\n\r\nThe `huggingface-hub` lib did a breaking change a few months ago, and you're using an old version of `datasets` that does't support it",

"Awesome thanks a lot! Everything works just fine with `datasets==2.9.0` :-) ",

"Getting same error with latest versions.\r\n\r\n\r\n```shell\r\n---------------------------------------------------------------------------\r\nTypeError Traceback (most recent call last)\r\nCell In[99], line 1\r\n----> 1 dataset.push_to_hub(\"mirfan899/kids_phoneme_asr\")\r\n\r\nFile /opt/conda/lib/python3.10/site-packages/datasets/arrow_dataset.py:3538, in Dataset.push_to_hub(self, repo_id, split, private, token, branch, shard_size, embed_external_files)\r\n 3493 def push_to_hub(\r\n 3494 self,\r\n 3495 repo_id: str,\r\n (...)\r\n 3501 embed_external_files: bool = True,\r\n 3502 ):\r\n 3503 \"\"\"Pushes the dataset to the hub.\r\n 3504 The dataset is pushed using HTTP requests and does not need to have neither git or git-lfs installed.\r\n 3505 \r\n (...)\r\n 3536 ```\r\n 3537 \"\"\"\r\n-> 3538 repo_id, split, uploaded_size, dataset_nbytes = self._push_parquet_shards_to_hub(\r\n 3539 repo_id=repo_id,\r\n 3540 split=split,\r\n 3541 private=private,\r\n 3542 token=token,\r\n 3543 branch=branch,\r\n 3544 shard_size=shard_size,\r\n 3545 embed_external_files=embed_external_files,\r\n 3546 )\r\n 3547 organization, dataset_name = repo_id.split(\"/\")\r\n 3548 info_to_dump = self.info.copy()\r\n\r\nFile /opt/conda/lib/python3.10/site-packages/datasets/arrow_dataset.py:3474, in Dataset._push_parquet_shards_to_hub(self, repo_id, split, private, token, branch, shard_size, embed_external_files)\r\n 3472 shard.to_parquet(buffer)\r\n 3473 uploaded_size += buffer.tell()\r\n-> 3474 _retry(\r\n 3475 api.upload_file,\r\n 3476 func_kwargs=dict(\r\n 3477 path_or_fileobj=buffer.getvalue(),\r\n 3478 path_in_repo=path_in_repo(index),\r\n 3479 repo_id=repo_id,\r\n 3480 token=token,\r\n 3481 repo_type=\"dataset\",\r\n 3482 revision=branch,\r\n 3483 identical_ok=True,\r\n 3484 ),\r\n 3485 exceptions=HTTPError,\r\n 3486 status_codes=[504],\r\n 3487 base_wait_time=2.0,\r\n 3488 max_retries=5,\r\n 3489 max_wait_time=20.0,\r\n 3490 )\r\n 3491 return repo_id, split, uploaded_size, 
dataset_nbytes\r\n\r\nFile /opt/conda/lib/python3.10/site-packages/datasets/utils/file_utils.py:330, in _retry(func, func_args, func_kwargs, exceptions, status_codes, max_retries, base_wait_time, max_wait_time)\r\n 328 while True:\r\n 329 try:\r\n--> 330 return func(*func_args, **func_kwargs)\r\n 331 except exceptions as err:\r\n 332 if retry >= max_retries or (status_codes and err.response.status_code not in status_codes):\r\n\r\nFile /opt/conda/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py:120, in validate_hf_hub_args.<locals>._inner_fn(*args, **kwargs)\r\n 117 if check_use_auth_token:\r\n 118 kwargs = smoothly_deprecate_use_auth_token(fn_name=fn.__name__, has_token=has_token, kwargs=kwargs)\r\n--> 120 return fn(*args, **kwargs)\r\n\r\nTypeError: HfApi.upload_file() got an unexpected keyword argument 'identical_ok'\r\n```",

"Feel free to update `datasets` and `huggingface-hub`, it should fix it :)",

"I went ahead and upgraded both datasets and hub and still getting the same error\r\n",

"Which version do you have ? It's been a while since it has been fixed",

"huggingface 0.0.1\r\nhuggingface-hub 0.17.1\r\ndatasets 2.14.5\r\n\r\nstill has the issue!!",

"I face the same issue even after upgrading :/"

] | 2023-02-08T10:18:41 | 2023-12-28T18:21:01 | 2023-02-08T10:35:48 |

CONTRIBUTOR

| null | null | null |

### Describe the bug

I often want to create a dummy dataset from a bigger dataset for fast iteration when training. However, I'm having a hard time doing this especially when trying to upload the dataset to the Hub.

### Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("lambdalabs/pokemon-blip-captions")

dataset["train"] = dataset["train"].select(range(20))

dataset.push_to_hub("patrickvonplaten/dummy_image_data")

```

gives:

```

~/python_bin/datasets/arrow_dataset.py in _push_parquet_shards_to_hub(self, repo_id, split, private, token, branch, max_shard_size, embed_external_files)

4003 base_wait_time=2.0,

4004 max_retries=5,

-> 4005 max_wait_time=20.0,

4006 )

4007 return repo_id, split, uploaded_size, dataset_nbytes

~/python_bin/datasets/utils/file_utils.py in _retry(func, func_args, func_kwargs, exceptions, status_codes, max_retries, base_wait_time, max_wait_time)

328 while True:

329 try:

--> 330 return func(*func_args, **func_kwargs)

331 except exceptions as err:

332 if retry >= max_retries or (status_codes and err.response.status_code not in status_codes):

~/hf/lib/python3.7/site-packages/huggingface_hub/utils/_validators.py in _inner_fn(*args, **kwargs)

122 )

123

--> 124 return fn(*args, **kwargs)

125

126 return _inner_fn # type: ignore

TypeError: upload_file() got an unexpected keyword argument 'identical_ok'

In [2]:

```

### Expected behavior

I would have expected this to work. For me, it is the most intuitive way of creating a dummy dataset.

### Environment info

```

- `datasets` version: 2.1.1.dev0

- Platform: Linux-4.19.0-22-cloud-amd64-x86_64-with-debian-10.13

- Python version: 3.7.3

- PyArrow version: 11.0.0

- Pandas version: 1.3.5

```
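
Since the replies in this thread attribute the `TypeError` to mismatched `datasets` and `huggingface-hub` versions, a quick way to see what is installed is sketched below using only the standard library. `installed_version` is a hypothetical helper; it reports versions and makes no claim about which combinations are compatible.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is not installed."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


for name in ("datasets", "huggingface-hub"):
    print(name, installed_version(name))
```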

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5511/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5511/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5508

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5508/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5508/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5508/events

|

https://github.com/huggingface/datasets/issues/5508

| 1,573,290,359 |

I_kwDODunzps5dxoF3

| 5,508 |

Saving a dataset after setting format to torch doesn't work, but only if filtering

|

{

"login": "joebhakim",

"id": 13984157,

"node_id": "MDQ6VXNlcjEzOTg0MTU3",

"avatar_url": "https://avatars.githubusercontent.com/u/13984157?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/joebhakim",

"html_url": "https://github.com/joebhakim",

"followers_url": "https://api.github.com/users/joebhakim/followers",

"following_url": "https://api.github.com/users/joebhakim/following{/other_user}",

"gists_url": "https://api.github.com/users/joebhakim/gists{/gist_id}",

"starred_url": "https://api.github.com/users/joebhakim/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/joebhakim/subscriptions",

"organizations_url": "https://api.github.com/users/joebhakim/orgs",

"repos_url": "https://api.github.com/users/joebhakim/repos",

"events_url": "https://api.github.com/users/joebhakim/events{/privacy}",

"received_events_url": "https://api.github.com/users/joebhakim/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[

"Hey, I'm a research engineer working on language modelling wanting to contribute to open source. I was wondering if I could give it a shot?",

"Hi! This issue was fixed in https://github.com/huggingface/datasets/pull/4972, so please install `datasets>=2.5.0` to avoid it."

] | 2023-02-06T21:08:58 | 2023-02-09T14:55:26 | 2023-02-09T14:55:26 |

NONE

| null | null | null |

### Describe the bug

Saving a dataset after setting format to torch doesn't work, but only if filtering

### Steps to reproduce the bug

```python
from datasets import Dataset

a = Dataset.from_dict({"b": [1, 2]})
a.set_format('torch')
a.save_to_disk("test_save")  # saves successfully
a.filter(None).save_to_disk("test_save_filter")  # does not
# >> [...] TypeError: Provided `function` which is applied to all elements of table returns a `dict` of types [<class 'torch.Tensor'>]. When using `batched=True`, make sure provided `function` returns a `dict` of types like `(<class 'list'>, <class 'numpy.ndarray'>)`.
# note: skipping the format change to torch lets this work.
```

### Expected behavior

Saving to work

### Environment info

- `datasets` version: 2.4.0

- Platform: Linux-6.1.9-arch1-1-x86_64-with-glibc2.36

- Python version: 3.10.9

- PyArrow version: 9.0.0

- Pandas version: 1.4.4

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5508/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5508/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5507

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5507/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5507/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5507/events

|

https://github.com/huggingface/datasets/issues/5507

| 1,572,667,036 |

I_kwDODunzps5dvP6c

| 5,507 |

Optimise behaviour in respect to indices mapping

|

{

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] |

open

| false |

{

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"login": "mariosasko",

"id": 47462742,

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/mariosasko",

"html_url": "https://github.com/mariosasko",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"type": "User",

"site_admin": false

}

] | null |

[] | 2023-02-06T14:25:55 | 2023-02-28T18:19:18 | null |

CONTRIBUTOR

| null | null | null |

_Originally [posted](https://huggingface.slack.com/archives/C02V51Q3800/p1675443873878489?thread_ts=1675418893.373479&cid=C02V51Q3800) on Slack_

Considering all this, perhaps for Datasets 3.0, we can do the following:

* [ ] have `contiguous=True` by default in `.shard` (requested in the survey and makes more sense for us since it doesn't create an indices mapping)

* [x] allow calling `save_to_disk` on "unflattened" datasets

* [ ] remove "hidden" expensive calls in `save_to_disk`, `unique`, `concatenate_datasets`, etc. For instance, instead of silently calling `flatten_indices` where it's needed, it's probably better to be explicit (considering how expensive these ops can be) and raise an error instead
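The difference between the two sharding modes in the first item can be sketched in plain Python. This is a toy model under stated assumptions, not the library's code: `shard_indices` and `is_single_slice` are hypothetical helper names, and the split arithmetic is only meant to mirror the idea that a contiguous shard is one slice while a strided shard needs an explicit list of row indices.

```python
def shard_indices(num_rows, num_shards, index, contiguous):
    """Return the row indices one shard would cover (toy model, not library code)."""
    if not 0 <= index < num_shards:
        raise ValueError("index must be in [0, num_shards)")
    if contiguous:
        # Contiguous: one unbroken slice, so the shard is just a range of rows.
        div, mod = divmod(num_rows, num_shards)
        start = div * index + min(index, mod)
        end = start + div + (1 if index < mod else 0)
        return list(range(start, end))
    # Strided: every num_shards-th row, which needs an explicit list of indices.
    return list(range(index, num_rows, num_shards))


def is_single_slice(indices):
    """True when the indices form one consecutive run, so no mapping is needed."""
    return all(b - a == 1 for a, b in zip(indices, indices[1:]))


contiguous_shard = shard_indices(10, 3, 0, contiguous=True)
strided_shard = shard_indices(10, 3, 0, contiguous=False)
print(contiguous_shard, is_single_slice(contiguous_shard))
print(strided_shard, is_single_slice(strided_shard))
```

A shard that is a single slice can be described by a start and a length, which is why the contiguous mode avoids the extra indirection discussed above.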

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5507/reactions",

"total_count": 1,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 1,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5507/timeline

| null | null |

https://api.github.com/repos/huggingface/datasets/issues/5506

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5506/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5506/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5506/events

|

https://github.com/huggingface/datasets/issues/5506

| 1,571,838,641 |

I_kwDODunzps5dsFqx

| 5,506 |

IterableDataset and Dataset return different batch sizes when using Trainer with multiple GPUs

|

{

"login": "kheyer",

"id": 38166299,

"node_id": "MDQ6VXNlcjM4MTY2Mjk5",

"avatar_url": "https://avatars.githubusercontent.com/u/38166299?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/kheyer",

"html_url": "https://github.com/kheyer",

"followers_url": "https://api.github.com/users/kheyer/followers",

"following_url": "https://api.github.com/users/kheyer/following{/other_user}",

"gists_url": "https://api.github.com/users/kheyer/gists{/gist_id}",

"starred_url": "https://api.github.com/users/kheyer/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/kheyer/subscriptions",

"organizations_url": "https://api.github.com/users/kheyer/orgs",

"repos_url": "https://api.github.com/users/kheyer/repos",

"events_url": "https://api.github.com/users/kheyer/events{/privacy}",

"received_events_url": "https://api.github.com/users/kheyer/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[

"Hi ! `datasets` doesn't do batching - the PyTorch DataLoader does and is created by the `Trainer`. Do you pass other arguments to training_args with respect to data loading ?\r\n\r\nAlso we recently released `.to_iterable_dataset` that does pretty much what you implemented, but using contiguous shards to get a better speed:\r\n```python\r\nif use_iterable_dataset:\r\n num_shards = 100\r\n dataset = dataset.to_iterable_dataset(num_shards=num_shards)\r\n```",

"This is the full set of training args passed. No training args were changed when switching dataset types.\r\n\r\n```python\r\ntraining_args = TrainingArguments(\r\n output_dir=\"./checkpoints\",\r\n overwrite_output_dir=True,\r\n num_train_epochs=1,\r\n per_device_train_batch_size=256,\r\n save_steps=2000,\r\n save_total_limit=4,\r\n prediction_loss_only=True,\r\n report_to='none',\r\n gradient_accumulation_steps=6,\r\n fp16=True,\r\n max_steps=60000,\r\n lr_scheduler_type='linear',\r\n warmup_ratio=0.1,\r\n logging_steps=100,\r\n weight_decay=0.01,\r\n adam_beta1=0.9,\r\n adam_beta2=0.98,\r\n adam_epsilon=1e-6,\r\n learning_rate=1e-4\r\n)\r\n```",

"I think the issue comes from `transformers`: https://github.com/huggingface/transformers/issues/21444",

"Makes sense. Given that it's a `transformers` issue and already being tracked, I'll close this out."

] | 2023-02-06T03:26:03 | 2023-02-08T18:30:08 | 2023-02-08T18:30:07 |

NONE

| null | null | null |

### Describe the bug

I am training a Roberta model using 2 GPUs and the `Trainer` API with a batch size of 256.

Initially I used a standard `Dataset`, but had issues with slow data loading. After reading [this issue](https://github.com/huggingface/datasets/issues/2252), I swapped to loading my dataset as contiguous shards and passing those to an `IterableDataset`. I observed an unexpected drop in GPU memory utilization, and found the batch size returned from the model had been cut in half.

When using `Trainer` with 2 GPUs and a batch size of 256, `Dataset` returns a batch of size 512 (256 per GPU), while `IterableDataset` returns a batch size of 256 (256 total). My guess is `IterableDataset` isn't accounting for multiple cards.
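The arithmetic behind the two observations can be checked with a small helper. This is only a sketch: `batch_sizes` and `per_gpu_share` are hypothetical names, and the gradient accumulation factor of 6 is taken from the training arguments quoted in the comments on this issue.

```python
def batch_sizes(per_device_batch_size, n_gpus, gradient_accumulation_steps=1):
    """Return (samples per forward step, samples per optimizer update)."""
    per_step = per_device_batch_size * n_gpus
    per_update = per_step * gradient_accumulation_steps
    return per_step, per_update


def per_gpu_share(total_batch_size, n_gpus):
    """Samples each GPU receives when one batch is split evenly across GPUs."""
    return total_batch_size // n_gpus


# Expected with a map-style Dataset: 256 per GPU, 2 GPUs.
print(batch_sizes(256, 2))
# Same setup with gradient accumulation over 6 steps.
print(batch_sizes(256, 2, gradient_accumulation_steps=6))
# Observed with IterableDataset: 256 in total, split over 2 GPUs.
print(per_gpu_share(256, 2))
```

Under these assumptions, each GPU sees half as many samples per step in the `IterableDataset` case, which would be consistent with the reported drop in GPU memory utilization.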

### Steps to reproduce the bug

```python

import datasets

from datasets import IterableDataset

from transformers import RobertaConfig

from transformers import RobertaTokenizerFast

from transformers import RobertaForMaskedLM

from transformers import DataCollatorForLanguageModeling

from transformers import Trainer, TrainingArguments

use_iterable_dataset = True

def gen_from_shards(shards):
    for shard in shards:
        for example in shard:
            yield example

dataset = datasets.load_from_disk('my_dataset.hf')

if use_iterable_dataset:
    n_shards = 100
    shards = [dataset.shard(num_shards=n_shards, index=i) for i in range(n_shards)]
    dataset = IterableDataset.from_generator(gen_from_shards, gen_kwargs={"shards": shards})

tokenizer = RobertaTokenizerFast.from_pretrained("./my_tokenizer", max_len=160, use_fast=True)

config = RobertaConfig(

vocab_size=8248,

max_position_embeddings=256,

num_attention_heads=8,

num_hidden_layers=6,

type_vocab_size=1)

model = RobertaForMaskedLM(config=config)

data_collator = DataCollatorForLanguageModeling(tokenizer=tokenizer, mlm=True, mlm_probability=0.15)

training_args = TrainingArguments(

per_device_train_batch_size=256

# other args removed for brevity

)

trainer = Trainer(

model=model,

args=training_args,

data_collator=data_collator,

train_dataset=dataset,

)

trainer.train()

```

### Expected behavior

Expected `Dataset` and `IterableDataset` to have the same batch size behavior. If the current behavior is intentional, the batch size printout at the start of training should be updated. Currently, both dataset classes result in `Trainer` printing the same total batch size, even though the batch size sent to the GPUs differs.

### Environment info

datasets 2.7.1

transformers 4.25.1

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5506/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5506/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5505

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5505/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5505/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5505/events

|

https://github.com/huggingface/datasets/issues/5505

| 1,571,720,814 |

I_kwDODunzps5dro5u

| 5,505 |

PyTorch BatchSampler still loads from Dataset one-by-one

|

{

"login": "davidgilbertson",

"id": 4443482,

"node_id": "MDQ6VXNlcjQ0NDM0ODI=",

"avatar_url": "https://avatars.githubusercontent.com/u/4443482?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/davidgilbertson",

"html_url": "https://github.com/davidgilbertson",

"followers_url": "https://api.github.com/users/davidgilbertson/followers",

"following_url": "https://api.github.com/users/davidgilbertson/following{/other_user}",

"gists_url": "https://api.github.com/users/davidgilbertson/gists{/gist_id}",

"starred_url": "https://api.github.com/users/davidgilbertson/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/davidgilbertson/subscriptions",

"organizations_url": "https://api.github.com/users/davidgilbertson/orgs",

"repos_url": "https://api.github.com/users/davidgilbertson/repos",

"events_url": "https://api.github.com/users/davidgilbertson/events{/privacy}",

"received_events_url": "https://api.github.com/users/davidgilbertson/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[

"This change seems to come from a few months ago in the PyTorch side. That's good news and it means we may not need to pass a batch_sampler as soon as we add `Dataset.__getitems__` to get the optimal speed :)\r\n\r\nThanks for reporting ! Would you like to open a PR to add `__getitems__` and remove this outdated documentation ?",

"Yeah I figured this was the sort of thing that probably once worked. I can confirm that you no longer need the batch sampler, just `batch_size=n` in the `DataLoader`.\r\n\r\nI'll pass on the PR, I'm flat out right now, sorry."

] | 2023-02-06T01:14:55 | 2023-02-19T18:27:30 | 2023-02-19T18:27:30 |

NONE

| null | null | null |

### Describe the bug

In [the docs here](https://huggingface.co/docs/datasets/use_with_pytorch#use-a-batchsampler), it mentions the issue of the Dataset being read one-by-one, then states that using a BatchSampler resolves the issue.

I'm not sure if this is a mistake in the docs or the code, but it seems that the only way for a Dataset to be passed a list of indexes by PyTorch (instead of one index at a time) is to define a `__getitems__` method (note the plural) on the Dataset object, and since the HF Dataset doesn't have this, PyTorch executes [this line of code](https://github.com/pytorch/pytorch/blob/master/torch/utils/data/_utils/fetch.py#L58), reverting to fetching one-by-one.

### Steps to reproduce the bug

You can put a breakpoint in `Dataset.__getitem__()` or just print the args from there and see that it's called multiple times for a single `next(iter(dataloader))`, even when using the code from the docs:

```py

from torch.utils.data.sampler import BatchSampler, RandomSampler

batch_sampler = BatchSampler(RandomSampler(ds), batch_size=32, drop_last=False)

dataloader = DataLoader(ds, batch_sampler=batch_sampler)

```

### Expected behavior

The expected behaviour would be for it to fetch batches from the dataset, rather than one-by-one.

To demonstrate that there is room for improvement: once I have a HF dataset `ds`, if I just add this line:

```py

ds.__getitems__ = ds.__getitem__

```

...then the time taken to loop over the dataset improves considerably (for wikitext-103, from one minute to 13 seconds with batch size 32). Probably not a big deal in the grand scheme of things, but seems like an easy win.
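The fallback described above can be modelled without PyTorch. The sketch below is a simplified stand-in, not PyTorch's actual fetcher: `RowDataset`, `BatchedRowDataset` and `fetch` are hypothetical names, and `fetch` only imitates the "use `__getitems__` if present, otherwise index one by one" decision.

```python
class RowDataset:
    """Map-style dataset that counts how often it is asked for data."""

    def __init__(self, rows):
        self.rows = rows
        self.calls = 0

    def __len__(self):
        return len(self.rows)

    def __getitem__(self, idx):
        self.calls += 1
        return self.rows[idx]


class BatchedRowDataset(RowDataset):
    """Same data, but also exposes the plural __getitems__ method."""

    def __getitems__(self, indices):
        self.calls += 1
        return [self.rows[i] for i in indices]


def fetch(dataset, batch_indices):
    """Fetch one batch, preferring a single batched call when available."""
    if hasattr(dataset, "__getitems__") and dataset.__getitems__:
        return dataset.__getitems__(batch_indices)
    return [dataset[i] for i in batch_indices]


plain = RowDataset(list(range(100)))
batched = BatchedRowDataset(list(range(100)))
indices = list(range(32))

fetch(plain, indices)
fetch(batched, indices)
print(plain.calls, batched.calls)
```

In this toy model, a batch of 32 costs 32 calls on the plain dataset and a single call on the batched one, which is the kind of overhead the timing comparison above points at.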

### Environment info

- `datasets` version: 2.9.0

- Platform: Linux-5.10.102.1-microsoft-standard-WSL2-x86_64-with-glibc2.31

- Python version: 3.10.8

- PyArrow version: 10.0.1

- Pandas version: 1.5.3

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5505/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5505/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5500

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5500/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5500/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5500/events

|

https://github.com/huggingface/datasets/issues/5500

| 1,569,257,240 |

I_kwDODunzps5diPcY

| 5,500 |

WMT19 custom download checksum error

|

{

"login": "Hannibal046",

"id": 38466901,

"node_id": "MDQ6VXNlcjM4NDY2OTAx",

"avatar_url": "https://avatars.githubusercontent.com/u/38466901?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/Hannibal046",

"html_url": "https://github.com/Hannibal046",

"followers_url": "https://api.github.com/users/Hannibal046/followers",

"following_url": "https://api.github.com/users/Hannibal046/following{/other_user}",

"gists_url": "https://api.github.com/users/Hannibal046/gists{/gist_id}",

"starred_url": "https://api.github.com/users/Hannibal046/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Hannibal046/subscriptions",

"organizations_url": "https://api.github.com/users/Hannibal046/orgs",

"repos_url": "https://api.github.com/users/Hannibal046/repos",

"events_url": "https://api.github.com/users/Hannibal046/events{/privacy}",

"received_events_url": "https://api.github.com/users/Hannibal046/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[

"I updated the `datasets` version and it works."

] | 2023-02-03T05:45:37 | 2023-02-03T05:52:56 | 2023-02-03T05:52:56 |

NONE

| null | null | null |

### Describe the bug

I use the following scripts to download data from WMT19:

```python

import datasets

from datasets import inspect_dataset, load_dataset_builder

from wmt19.wmt_utils import _TRAIN_SUBSETS,_DEV_SUBSETS

## this is a must due to: https://discuss.huggingface.co/t/load-dataset-hangs-with-local-files/28034/3

if __name__ == '__main__':
    dev_subsets,train_subsets = [],[]
    for subset in _TRAIN_SUBSETS:
        if subset.target=='en' and 'de' in subset.sources:
            train_subsets.append(subset.name)
    for subset in _DEV_SUBSETS:
        if subset.target=='en' and 'de' in subset.sources:
            dev_subsets.append(subset.name)

    inspect_dataset("wmt19", "./wmt19")
    builder = load_dataset_builder(
        "./wmt19/wmt_utils.py",
        language_pair=("de", "en"),
        subsets={
            datasets.Split.TRAIN: train_subsets,
            datasets.Split.VALIDATION: dev_subsets,
        },
    )
    builder.download_and_prepare()
    ds = builder.as_dataset()
    ds.to_json("../data/wmt19/ende/data.json")

```

And I got the following error:

```

Traceback (most recent call last):
  File "draft.py", line 26, in <module>
    builder.download_and_prepare()
  File "/Users/hannibal046/anaconda3/lib/python3.8/site-packages/datasets/builder.py", line 605, in download_and_prepare
    self._download_and_prepare(
  File "/Users/hannibal046/anaconda3/lib/python3.8/site-packages/datasets/builder.py", line 1104, in _download_and_prepare
    super()._download_and_prepare(dl_manager, verify_infos, check_duplicate_keys=verify_infos)
  File "/Users/hannibal046/anaconda3/lib/python3.8/site-packages/datasets/builder.py", line 676, in _download_and_prepare
    verify_checksums(
  File "/Users/hannibal046/anaconda3/lib/python3.8/site-packages/datasets/utils/info_utils.py", line 35, in verify_checksums
    raise UnexpectedDownloadedFile(str(set(recorded_checksums) - set(expected_checksums)))

datasets.utils.info_utils.UnexpectedDownloadedFile: {'https://s3.amazonaws.com/web-language-models/paracrawl/release1/paracrawl-release1.en-de.zipporah0-dedup-clean.tgz', 'https://huggingface.co/datasets/wmt/wmt13/resolve/main-zip/training-parallel-europarl-v7.zip', 'https://huggingface.co/datasets/wmt/wmt18/resolve/main-zip/translation-task/rapid2016.zip', 'https://huggingface.co/datasets/wmt/wmt18/resolve/main-zip/translation-task/training-parallel-nc-v13.zip', 'https://huggingface.co/datasets/wmt/wmt17/resolve/main-zip/translation-task/training-parallel-nc-v12.zip', 'https://huggingface.co/datasets/wmt/wmt14/resolve/main-zip/training-parallel-nc-v9.zip', 'https://huggingface.co/datasets/wmt/wmt15/resolve/main-zip/training-parallel-nc-v10.zip', 'https://huggingface.co/datasets/wmt/wmt16/resolve/main-zip/translation-task/training-parallel-nc-v11.zip'}

```

### Steps to reproduce the bug

see above

### Expected behavior

download data successfully

### Environment info

datasets==2.1.0

python==3.8

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5500/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5500/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5499

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5499/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5499/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5499/events

|

https://github.com/huggingface/datasets/issues/5499

| 1,568,937,026 |

I_kwDODunzps5dhBRC

| 5,499 |

`load_dataset` has ~4 seconds of overhead for cached data

|

{

"login": "davidgilbertson",

"id": 4443482,

"node_id": "MDQ6VXNlcjQ0NDM0ODI=",

"avatar_url": "https://avatars.githubusercontent.com/u/4443482?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/davidgilbertson",

"html_url": "https://github.com/davidgilbertson",

"followers_url": "https://api.github.com/users/davidgilbertson/followers",

"following_url": "https://api.github.com/users/davidgilbertson/following{/other_user}",

"gists_url": "https://api.github.com/users/davidgilbertson/gists{/gist_id}",

"starred_url": "https://api.github.com/users/davidgilbertson/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/davidgilbertson/subscriptions",

"organizations_url": "https://api.github.com/users/davidgilbertson/orgs",

"repos_url": "https://api.github.com/users/davidgilbertson/repos",

"events_url": "https://api.github.com/users/davidgilbertson/events{/privacy}",

"received_events_url": "https://api.github.com/users/davidgilbertson/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] |

open

| false | null |

[] | null |

[

"Hi ! To skip the verification step that checks if newer data exist, you can enable offline mode with `HF_DATASETS_OFFLINE=1`.\r\n\r\nAlthough I agree this step should be much faster for datasets hosted on the HF Hub - we could just compare the commit hash from the local data and the remote git repository. We haven't been leveraging the git commit hashes, since the library was built before we even had git repositories for each dataset on HF.",

"Thanks @lhoestq, from memory, when I recorded those times I had `HF_DATASETS_OFFLINE` set."

] | 2023-02-02T23:34:50 | 2023-02-07T19:35:11 | null |

NONE

| null | null | null |

### Feature request

When loading a dataset that has been cached locally, the `load_dataset` function takes a lot longer than it should take to fetch the dataset from disk (or memory).

This is particularly noticeable for smaller datasets. For example, with wikitext-2, `load_dataset` (once cached) takes about 40 times longer than `load_from_disk`:

⏱ 4.84s ⮜ load_dataset

⏱ 119ms ⮜ load_from_disk

### Motivation

I assume this is doing something like checking for a newer version.

If so, that's an age old problem: do you make the user wait _every single time they load from cache_ or do you do something like load from cache always, _then_ check for a newer version and alert if they have stale data. The decision usually revolves around what percentage of the time the data will have been updated, and how dangerous old data is.

For most datasets it's extremely unlikely that there will be a newer version on any given run, so 99% of the time this is just wasted time.

Maybe you don't want to make that decision for all users, but at least having the _option_ to not wait for checks would be an improvement.
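The opt-out requested here can be sketched as a cache lookup with a switch for the freshness check. Everything in this sketch is hypothetical (`load_cached`, `check_for_updates`, the `download` and `latest_version` callables); it illustrates the trade-off only and says nothing about how `load_dataset` is implemented.

```python
def load_cached(cache, key, download, latest_version, check_for_updates=True):
    """Return data for `key`, consulting the remote only when asked to.

    cache:           dict mapping key -> (version, data)
    download:        callable returning (version, data) from the remote
    latest_version:  callable returning the newest remote version (slow)
    """
    entry = cache.get(key)
    if entry is not None:
        version, data = entry
        # Skip the slow remote check entirely when the caller opts out.
        if not check_for_updates or latest_version() == version:
            return data
    # Cache miss or stale entry: fetch and store the fresh copy.
    version, data = download()
    cache[key] = (version, data)
    return data


remote_checks = []

def latest_version():
    remote_checks.append(1)  # record each slow round trip
    return "v1"

def download():
    return "v1", ["row 0", "row 1"]

cache = {}
load_cached(cache, "wikitext-2", download, latest_version)                           # miss: downloads
load_cached(cache, "wikitext-2", download, latest_version)                           # hit: checks remote
load_cached(cache, "wikitext-2", download, latest_version, check_for_updates=False)  # hit: no check
print(len(remote_checks))
```

With the check disabled, a cache hit never touches the remote, which is the behaviour the request asks to make available.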

### Your contribution

.

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5499/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5499/timeline

| null | null |

https://api.github.com/repos/huggingface/datasets/issues/5498

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5498/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5498/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5498/events

|

https://github.com/huggingface/datasets/issues/5498

| 1,568,190,529 |

I_kwDODunzps5deLBB

| 5,498 |

TypeError: 'bool' object is not iterable when filtering a datasets.arrow_dataset.Dataset

|

{

"login": "vmuel",

"id": 91255010,

"node_id": "MDQ6VXNlcjkxMjU1MDEw",

"avatar_url": "https://avatars.githubusercontent.com/u/91255010?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/vmuel",

"html_url": "https://github.com/vmuel",

"followers_url": "https://api.github.com/users/vmuel/followers",

"following_url": "https://api.github.com/users/vmuel/following{/other_user}",

"gists_url": "https://api.github.com/users/vmuel/gists{/gist_id}",

"starred_url": "https://api.github.com/users/vmuel/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/vmuel/subscriptions",

"organizations_url": "https://api.github.com/users/vmuel/orgs",

"repos_url": "https://api.github.com/users/vmuel/repos",

"events_url": "https://api.github.com/users/vmuel/events{/privacy}",

"received_events_url": "https://api.github.com/users/vmuel/received_events",

"type": "User",

"site_admin": false

}

|

[] |

closed

| false | null |

[] | null |

[

"Hi! Instead of a single boolean, your filter function should return an iterable (of booleans) in the batched mode like so:\r\n```python\r\ntrain_dataset = train_dataset.filter(\r\n function=lambda batch: [image is not None for image in batch[\"image\"]], \r\n batched=True,\r\n batch_size=10)\r\n```\r\n\r\nPS: You can make this operation much faster by operating directly on the arrow data to skip the decoding part:\r\n```python\r\ntrain_dataset = train_dataset.with_format(\"arrow\")\r\ntrain_dataset = train_dataset.filter(\r\n function=lambda table: table[\"image\"].is_valid().to_pylist(), \r\n batched=True,\r\n batch_size=100)\r\ntrain_dataset = train_dataset.with_format(None)\r\n```",

"Thanks a lot!",

"I hit the same issue and the error message isn't really clear on what's going wrong. It might be helpful to update the docs with a batched example."

] | 2023-02-02T14:46:49 | 2023-10-08T06:12:47 | 2023-02-04T17:19:36 |

NONE

| null | null | null |

### Describe the bug

Hi,

Thanks for the amazing work on the library!

**Describe the bug**

I think I might have noticed a small bug in the filter method.

Having loaded a dataset using `load_dataset`, when I try to filter out empty entries with `batched=True`, I get a TypeError.

### Steps to reproduce the bug

```

train_dataset = train_dataset.filter(

function=lambda example: example["image"] is not None,

batched=True,

batch_size=10)

```

Error message:

```

File .../lib/python3.9/site-packages/datasets/fingerprint.py:480, in fingerprint_transform.<locals>._fingerprint.<locals>.wrapper(*args, **kwargs)

476 validate_fingerprint(kwargs[fingerprint_name])

478 # Call actual function

--> 480 out = func(self, *args, **kwargs)

...

-> 5666 indices_array = [i for i, to_keep in zip(indices, mask) if to_keep]

5667 if indices_mapping is not None:

5668 indices_array = pa.array(indices_array, type=pa.uint64())

TypeError: 'bool' object is not iterable

```

**Removing `batched=True` works around the issue.**

### Expected behavior

According to the doc, "[batch_size corresponds to the] number of examples per batch provided to function if batched = True", so we shouldn't need to remove the `batched=True` argument?

source: https://huggingface.co/docs/datasets/v2.9.0/en/package_reference/main_classes#datasets.Dataset.filter
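The difference between the two calling conventions can be reproduced with a toy filter in plain Python. This is a simplified model under stated assumptions, not the library's implementation: `filter_rows` is a hypothetical function that treats a dataset as a dict of columns and, in batched mode, zips row indices with the mask returned by the user function.

```python
def filter_rows(columns, function, batched=False, batch_size=1000):
    """Return the indices of the rows kept by `function` (toy model).

    columns: dict mapping column name -> list of values (all the same length)
    """
    num_rows = len(next(iter(columns.values())))
    kept = []
    if not batched:
        # Per-example mode: the function sees one row and returns one bool.
        for i in range(num_rows):
            example = {name: values[i] for name, values in columns.items()}
            if function(example):
                kept.append(i)
        return kept
    # Batched mode: the function sees a dict of lists and must return
    # an iterable of bools, one per row in the batch.
    for start in range(0, num_rows, batch_size):
        stop = min(start + batch_size, num_rows)
        batch = {name: values[start:stop] for name, values in columns.items()}
        mask = function(batch)
        kept.extend(i for i, keep in zip(range(start, stop), mask) if keep)
    return kept


columns = {"image": ["a.png", None, "b.png"]}

# Per-example predicate, non-batched: works.
print(filter_rows(columns, lambda example: example["image"] is not None))

# Batched predicate returning one bool per row: works.
print(filter_rows(
    columns,
    lambda batch: [image is not None for image in batch["image"]],
    batched=True,
    batch_size=2,
))

# Per-example predicate in batched mode: a single bool cannot be zipped.
try:
    filter_rows(columns, lambda example: example["image"] is not None, batched=True)
except TypeError as err:
    print("TypeError:", err)
```

In this model, `batched=True` changes what the function receives and what it must return, so a predicate written for single examples returns a lone boolean for the whole batch and fails at the zip step.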

### Environment info

- `datasets` version: 2.9.0

- Platform: Linux-5.4.0-122-generic-x86_64-with-glibc2.31

- Python version: 3.9.10

- PyArrow version: 10.0.1

- Pandas version: 1.5.3

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5498/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5498/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5496

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5496/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5496/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5496/events

|

https://github.com/huggingface/datasets/issues/5496

| 1,567,301,765 |

I_kwDODunzps5dayCF

| 5,496 |

Add a `reduce` method

|

{

"login": "zhangir-azerbayev",

"id": 59542043,

"node_id": "MDQ6VXNlcjU5NTQyMDQz",

"avatar_url": "https://avatars.githubusercontent.com/u/59542043?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/zhangir-azerbayev",

"html_url": "https://github.com/zhangir-azerbayev",

"followers_url": "https://api.github.com/users/zhangir-azerbayev/followers",

"following_url": "https://api.github.com/users/zhangir-azerbayev/following{/other_user}",

"gists_url": "https://api.github.com/users/zhangir-azerbayev/gists{/gist_id}",

"starred_url": "https://api.github.com/users/zhangir-azerbayev/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/zhangir-azerbayev/subscriptions",

"organizations_url": "https://api.github.com/users/zhangir-azerbayev/orgs",

"repos_url": "https://api.github.com/users/zhangir-azerbayev/repos",

"events_url": "https://api.github.com/users/zhangir-azerbayev/events{/privacy}",

"received_events_url": "https://api.github.com/users/zhangir-azerbayev/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"id": 1935892871,

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement",

"name": "enhancement",

"color": "a2eeef",

"default": true,

"description": "New feature or request"

}

] |

closed

| false | null |

[] | null |

[

"Hi! Sure, feel free to open a PR, so we can see the API you have in mind.",

"I would like to give it a go! #self-assign",

"Closing as `Dataset.map` can be used instead (see https://github.com/huggingface/datasets/pull/5533#issuecomment-1440571658 and https://github.com/huggingface/datasets/pull/5533#issuecomment-1446403263)"

] | 2023-02-02T04:30:22 | 2023-07-21T14:24:32 | 2023-07-21T14:24:32 |

NONE

| null | null | null |

### Feature request

Right now the `Dataset` class implements `map()` and `filter()`, but leaves out the third functional idiom popular among Python users: `reduce`.

### Motivation

A `reduce` method is often useful when calculating dataset statistics, for example, the occurrence of a particular n-gram or the average line length of a code dataset.
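To illustrate the kind of statistic meant here, a minimal sketch using the standard library's `functools.reduce` over an iterable of example dicts. The column name `content`, the helper names, and the toy rows are hypothetical; this is not a proposed `Dataset.reduce` API.

```python
from functools import reduce
from typing import Dict, Iterable, Tuple


def add_line_stats(acc: Tuple[int, int], example: Dict[str, str]) -> Tuple[int, int]:
    """Fold one example into the running (total characters, total lines) pair."""
    lines = example["content"].splitlines()
    total_chars, total_lines = acc
    return total_chars + sum(len(line) for line in lines), total_lines + len(lines)


def average_line_length(examples: Iterable[Dict[str, str]]) -> float:
    """Average number of characters per line across all examples."""
    total_chars, total_lines = reduce(add_line_stats, examples, (0, 0))
    return total_chars / total_lines if total_lines else 0.0


# Toy rows standing in for a code dataset (hypothetical data).
toy_rows = [{"content": "ab\ncdef"}, {"content": "xyz"}]
avg = average_line_length(toy_rows)
```

Since iterating a `Dataset` yields one dict per example, the same fold could in principle be applied to it directly, at the cost of a plain Python loop over every row.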

### Your contribution

I haven't contributed to `datasets` before, but I don't expect this will be too difficult, since the implementation will closely follow that of `map` and `filter`. I could have a crack over the weekend.

|

{

"url": "https://api.github.com/repos/huggingface/datasets/issues/5496/reactions",

"total_count": 0,

"+1": 0,

"-1": 0,

"laugh": 0,

"hooray": 0,

"confused": 0,

"heart": 0,

"rocket": 0,

"eyes": 0

}

|

https://api.github.com/repos/huggingface/datasets/issues/5496/timeline

| null |

completed

|

https://api.github.com/repos/huggingface/datasets/issues/5495

|

https://api.github.com/repos/huggingface/datasets

|

https://api.github.com/repos/huggingface/datasets/issues/5495/labels{/name}

|

https://api.github.com/repos/huggingface/datasets/issues/5495/comments

|

https://api.github.com/repos/huggingface/datasets/issues/5495/events

|

https://github.com/huggingface/datasets/issues/5495

| 1,566,803,452 |

I_kwDODunzps5dY4X8

| 5,495 |

to_tf_dataset fails with datetime UTC columns even if not included in columns argument

|

{

"login": "dwyatte",

"id": 2512762,

"node_id": "MDQ6VXNlcjI1MTI3NjI=",

"avatar_url": "https://avatars.githubusercontent.com/u/2512762?v=4",

"gravatar_id": "",

"url": "https://api.github.com/users/dwyatte",

"html_url": "https://github.com/dwyatte",

"followers_url": "https://api.github.com/users/dwyatte/followers",

"following_url": "https://api.github.com/users/dwyatte/following{/other_user}",

"gists_url": "https://api.github.com/users/dwyatte/gists{/gist_id}",

"starred_url": "https://api.github.com/users/dwyatte/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/dwyatte/subscriptions",

"organizations_url": "https://api.github.com/users/dwyatte/orgs",

"repos_url": "https://api.github.com/users/dwyatte/repos",

"events_url": "https://api.github.com/users/dwyatte/events{/privacy}",

"received_events_url": "https://api.github.com/users/dwyatte/received_events",

"type": "User",

"site_admin": false

}

|

[

{

"id": 1935892857,

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug",

"name": "bug",

"color": "d73a4a",

"default": true,

"description": "Something isn't working"

},

{

"id": 1935892877,

"node_id": "MDU6TGFiZWwxOTM1ODkyODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/good%20first%20issue",

"name": "good first issue",

"color": "7057ff",

"default": true,

"description": "Good for newcomers"

}

] |

closed

| false | null |

[] | null |

[

"Hi! This is indeed a bug in our zero-copy logic.\r\n\r\nTo fix it, instead of the line:\r\nhttps://github.com/huggingface/datasets/blob/7cfac43b980ab9e4a69c2328f085770996323005/src/datasets/features/features.py#L702\r\n\r\nwe should have:\r\n```python\r\nreturn pa.types.is_primitive(pa_type) and not (pa.types.is_boolean(pa_type) or pa.types.is_temporal(pa_type))\r\n```",

"@mariosasko submitted a small PR [here](https://github.com/huggingface/datasets/pull/5504)"

] | 2023-02-01T20:47:33 | 2023-02-08T14:33:19 | 2023-02-08T14:33:19 |

CONTRIBUTOR

| null | null | null |

### Describe the bug

There appears to be some eager behavior in `to_tf_dataset` that runs against every column in a dataset, even columns that aren't included in the `columns` argument. This is a problem for UTC datetime columns, which do not work with zero-copy conversion. If my datetime column has no UTC information, everything works as expected.

### Steps to reproduce the bug

```python

import numpy as np

import pandas as pd

from datasets import Dataset

df = pd.DataFrame(np.random.rand(2, 1), columns=["x"])

# df["dt"] = pd.to_datetime(["2023-01-01", "2023-01-01"]) # works fine

df["dt"] = pd.to_datetime(["2023-01-01 00:00:00.00000+00:00", "2023-01-01 00:00:00.00000+00:00"])

df.to_parquet("test.pq")

ds = Dataset.from_parquet("test.pq")

tf_ds = ds.to_tf_dataset(columns=["x"], batch_size=2, shuffle=True)

```

```

ArrowInvalid Traceback (most recent call last)

Cell In[1], line 12

8 df.to_parquet("test.pq")

11 ds = Dataset.from_parquet("test.pq")

---> 12 tf_ds = ds.to_tf_dataset(columns=["r"], batch_size=2, shuffle=True)

File ~/venv/lib/python3.8/site-packages/datasets/arrow_dataset.py:411, in TensorflowDatasetMixin.to_tf_dataset(self, batch_size, columns, shuffle, collate_fn, drop_remainder, collate_fn_args, label_cols, prefetch, num_workers)

407 dataset = self

409 # TODO(Matt, QL): deprecate the retention of label_ids and label

--> 411 output_signature, columns_to_np_types = dataset._get_output_signature(

412 dataset,

413 collate_fn=collate_fn,

414 collate_fn_args=collate_fn_args,

415 cols_to_retain=cols_to_retain,

416 batch_size=batch_size if drop_remainder else None,

417 )

419 if "labels" in output_signature:

420 if ("label_ids" in columns or "label" in columns) and "labels" not in columns:

File ~/venv/lib/python3.8/site-packages/datasets/arrow_dataset.py:254, in TensorflowDatasetMixin._get_output_signature(dataset, collate_fn, collate_fn_args, cols_to_retain, batch_size, num_test_batches)

252 for _ in range(num_test_batches):

253 indices = sample(range(len(dataset)), test_batch_size)

--> 254 test_batch = dataset[indices]

255 if cols_to_retain is not None: