---

pipeline_tag: any-to-any

library_name: transformers

tags:

- text-to-image

- image-editing

- image-understanding

- vision-language

- multimodal

- autoregressive

- unified-model

license: mit

---

## 🌌 UniPic2-SD3.5M-Kontext-2B

## 📖 Introduction

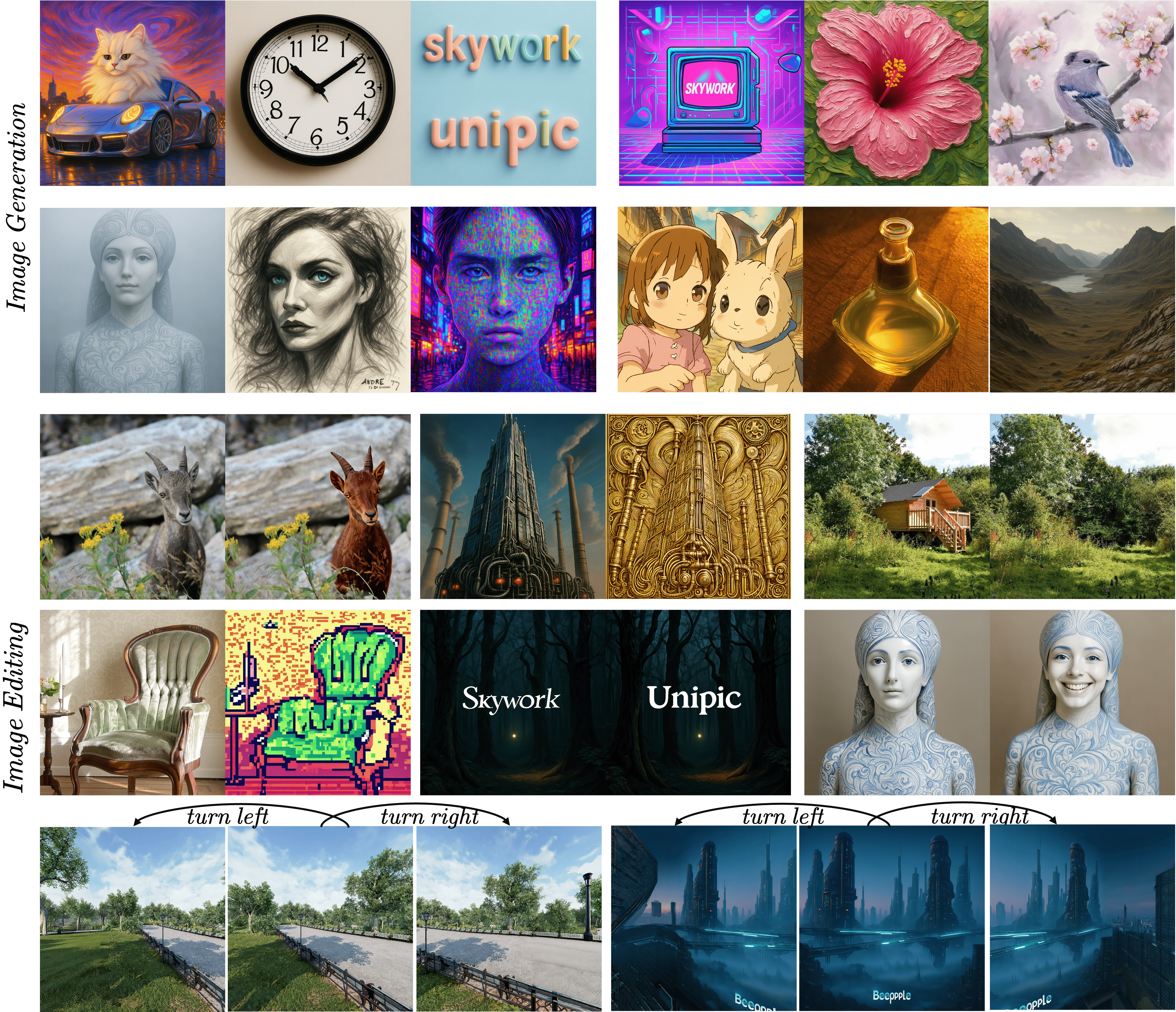

**UniPic2-SD3.5M-Kontext-2B** is a post-trained **T2I

** model built on the SD3.5-Medium. It focuses on text-to-image generation and image editing, delivering strong quality with a fast generation speed. It runs smoothly on a single 16 GB consumer GPU.

## 📊 Benchmarks

**UniPic2-SD3.5M-Kontext-2B** w/o GRPO achieves competitive results across a variety of vision-language tasks:

| Task | Score |

|--------------------|--------|

| 🧠 **GenEval** | 0.83 |

| 🖼️ **DPG-Bench** | 83.7 |

| ✂️ **GEditBench-EN** | 6.31 |

| 🧪 **ImgEdit-Bench** | 3.95 |

## 🧠 Usage

### 1. Clone the Repository

```bash

git clone https://github.com/SkyworkAI/UniPic

cd UniPic-2

```

### 2. Set Up the Environment

```bash

conda create -n unipic python=3.10

conda activate unipic

pip install -r requirements.txt

```

### 3.Text-to-Image Generation

```bash

import torch

from PIL import Image

from unipicv2.pipeline_stable_diffusion_3_kontext import StableDiffusion3KontextPipeline

from unipicv2.transformer_sd3_kontext import SD3Transformer2DKontextModel

from diffusers import FlowMatchEulerDiscreteScheduler, AutoencoderKL

from transformers import CLIPTextModelWithProjection, CLIPTokenizer, T5EncoderModel, T5TokenizerFast

# Load model components

pretrained_model_name_or_path = "Skywork/UniPic2-SD3.5M-Kontext-2B"

transformer = SD3Transformer2DKontextModel.from_pretrained(

pretrained_model_name_or_path, subfolder="transformer", torch_dtype=torch.bfloat16).cuda()

vae = AutoencoderKL.from_pretrained(

pretrained_model_name_or_path, subfolder="vae",

torch_dtype=torch.bfloat16, device_map="auto", low_cpu_mem_usage=True

).cuda()

# Load text encoders

text_encoder = CLIPTextModelWithProjection.from_pretrained(

pretrained_model_name_or_path, subfolder="text_encoder", torch_dtype=torch.bfloat16, device_map="auto", low_cpu_mem_usage=True

).cuda()

tokenizer = CLIPTokenizer.from_pretrained(pretrained_model_name_or_path, subfolder="tokenizer")

text_encoder_2 = CLIPTextModelWithProjection.from_pretrained(

pretrained_model_name_or_path, subfolder="text_encoder_2", torch_dtype=torch.bfloat16, device_map="auto", low_cpu_mem_usage=True

).cuda()

tokenizer_2 = CLIPTokenizer.from_pretrained(pretrained_model_name_or_path, subfolder="tokenizer_2")

text_encoder_3 = T5EncoderModel.from_pretrained(

pretrained_model_name_or_path, subfolder="text_encoder_3", torch_dtype=torch.bfloat16, device_map="auto", low_cpu_mem_usage=True

).cuda()

tokenizer_3 = T5TokenizerFast.from_pretrained(pretrained_model_name_or_path, subfolder="tokenizer_3")

scheduler = FlowMatchEulerDiscreteScheduler.from_pretrained(

pretrained_model_name_or_path, subfolder="scheduler"

)

# Create pipeline

pipeline = StableDiffusion3KontextPipeline(

transformer=transformer, vae=vae,

text_encoder=text_encoder, tokenizer=tokenizer,

text_encoder_2=text_encoder_2, tokenizer_2=tokenizer_2,

text_encoder_3=text_encoder_3, tokenizer_3=tokenizer_3,

scheduler=scheduler)

# Generate image

image = pipeline(

prompt='a pig with wings and a top hat flying over a happy futuristic scifi city',

negative_prompt='blurry, low quality, low resolution, distorted, deformed, broken content, missing parts, damaged details, artifacts, glitch, noise, pixelated, grainy, compression artifacts, bad composition, wrong proportion, incomplete editing, unfinished, unedited areas.',

height=512, width=384,

num_inference_steps=50,

guidance_scale=3.5,

generator=torch.Generator(device=transformer.device).manual_seed(42)

).images[0]

image.save("text2image.png")

```

### 4. Image Editing

```bash

# Load and preprocess image

def fix_longer_edge(x, image_size, factor=32):

w, h = x.size

if w >= h:

target_w = image_size

target_h = h * (target_w / w)

target_h = round(target_h / factor) * factor

else:

target_h = image_size

target_w = w * (target_h / h)

target_w = round(target_w / factor) * factor

x = x.resize(size=(target_w, target_h))

return x

image = Image.open("text2image.png")

image = fix_longer_edge(image, image_size=512)

negative_prompt = "blurry, low quality, low resolution, distorted, deformed, broken content, missing parts, damaged details, artifacts, glitch, noise, pixelated, grainy, compression artifacts, bad composition, wrong proportion, incomplete editing, unfinished, unedited areas."

# Edit image

edited_image = pipeline(

image=image,

prompt="remove the pig's hat",

negative_prompt=negative_prompt,

height=image.height, width=image.width,

num_inference_steps=50,

guidance_scale=3.5,

generator=torch.Generator(device=transformer.device).manual_seed(42)

).images[0]

edited_image.save("edited_img.png")

```

## 📄 License

This model is released under the MIT License.

## Citation

If you use Skywork-UniPic in your research, please cite:

```

@misc{wang2025skyworkunipicunifiedautoregressive,

title={Skywork UniPic: Unified Autoregressive Modeling for Visual Understanding and Generation},

author={Peiyu Wang and Yi Peng and Yimeng Gan and Liang Hu and Tianyidan Xie and Xiaokun Wang and Yichen Wei and Chuanxin Tang and Bo Zhu and Changshi Li and Hongyang Wei and Eric Li and Xuchen Song and Yang Liu and Yahui Zhou},

year={2025},

eprint={2508.03320},

archivePrefix={arXiv},

primaryClass={cs.CV},

url={https://arxiv.org/abs/2508.03320},

}

```